Three men, three short stories, one fire axe, and the most accurate prediction of the AI age ever published in a weekly British comic.

For a man who has never positioned himself as a visionary, John Wagner has an awkward habit of being right about things long before they happen, and The Art of Kenny Who? is the example that ought to make every working artist in 2026 stop what they are doing and read it cover to cover. He would deny the soothsayer label flatly, probably accuse you of softening him up for a free pint, and then steer the conversation somewhere drier, but the evidence keeps stacking up regardless. In 1986, in three short episodes of a weekly British comic written with Alan Grant and drawn by Cam Kennedy, he produced something that reads now less like satire and more like a script for the crisis the creative industries are presently failing to navigate. It is not, by his own standards, one of his best, which is the part that troubles me most. The fact that he almost casually predicted forty years of industrial collapse in a piece of work he probably barely remembers writing makes the accuracy worse rather than better.

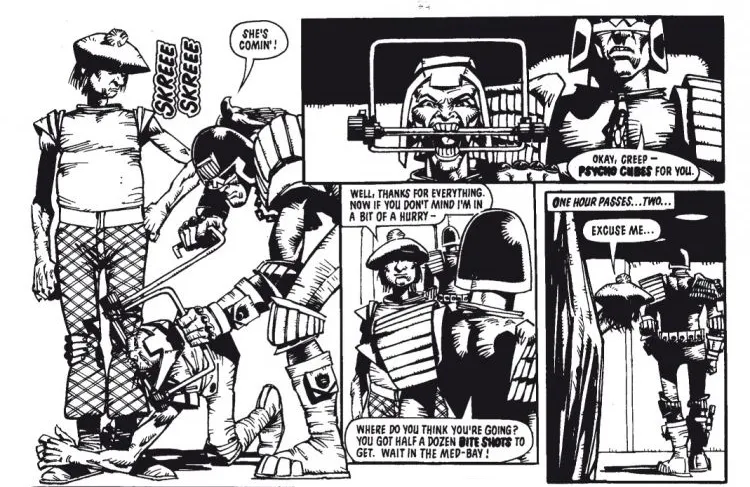

A young artist from the Caledonian Hab Zone, the future Scotland of Mega-City One’s twenty-second century, arrives in the big city with a portfolio under his arm. His name is Kenny Who?, the question mark being part of the name, a private joke we will come back to later. He has come south to pitch his work to Big 1, the city’s biggest trashzine, and he is the kind of artist anyone who has ever sat in a creative meeting will recognise instantly. He is naive. He is eager. He is convinced that the work will speak for itself, that if he can just get his portfolio in front of the right editor everything will fall into place, that the years of unpaid practice in the Cal-Hab tenement where he learned to draw will at last be acknowledged. Kennedy draws him with a kind of helpless dignity, a short man in his late thirties with red hair and earnest blue eyes, clutching a folder full of work he believes will change his life. The reader knows what is coming. Kenny does not.

He walks into the Big 1 offices. He hands his portfolio to the receptionist. She tells him to wait. He waits. He is eventually shown into the office of a senior editor, a fat, expensive-looking man behind a desk that costs more than Kenny will earn in his lifetime, and the editor flicks through the portfolio for perhaps thirty seconds. The verdict is brisk. The work does not cut the synthi-mustard. The style is not what Big 1 is looking for. Kenny is shown the door. He stands in the corridor for a long moment, his portfolio back in his hand, the rejection ringing in his ears, and then he does what working-class Scots have done for centuries when life has just kicked them in the teeth. He goes to find a pub.

He sits at the bar with a drink he cannot really afford, staring at the wood grain in front of him, trying to absorb the failure. The television above the counter is showing adverts, the usual flicker of consumer noise nobody pays any real attention to, and at some point Kenny looks up. What he sees on the screen is his own artwork. His linework. His colour. His style. A Big 1 advertisement, professionally produced, broadcast across Mega-City One, drawn unmistakably in the hand of the man sitting at the bar staring up at it. Kennedy gives this moment its own panel, an artist looking at his own work and not recognising the world he is standing inside, and it is one of the cleanest pieces of cartooning in the strip. The pint in front of him goes cold. The advert plays out. The slogan flashes. Nobody else in the bar notices anything is wrong.

Kenny goes back to the Big 1 offices. He demands to see the editor. He demands an explanation. The editor, when he finally agrees to receive him, gives one with the patient air of a man explaining a perfectly reasonable business arrangement to a slow child. In Mega-City One, in the twenty-second century, the editor tells him, human artists are no longer required. The machines can imitate any style perfectly. They do not need wages, holidays, contracts, sleep, or food. They never miss a deadline. They never argue about scripts. They never come into the office demanding to know where their royalties are. The machines have been producing comics in Kenny’s style for some time now, the editor explains, and the work has been performing extremely well in the trashzine market. Kenny’s art was already on the wall before he walked in the door, sold under Big 1’s name, drawn by no one, and the editor seems genuinely puzzled that Kenny is upset about any of this. We didn’t steal your work, the editor says, with the bright reasonableness of someone who has rehearsed the line many times. We recreated it. Artists have been doing that since the dawn of time.

This is the line. This is the entire parable in a single piece of dialogue, set down by Wagner in 1986 and printed on cheap paper in a weekly British comic, and you can read it back now in the press release of any generative AI company you care to name. The argument has not changed in forty years. The line about recreation rather than theft. The casual appeal to art history as cover. The implicit suggestion that the artist objecting to the use of his own style is the unreasonable one in the conversation. The editor at Big 1 is not a science-fiction monster. The editor at Big 1 is a press release, a legal brief, a podcast interview with a tech founder, an op-ed in a serious magazine, a generation of comments under any artist’s social media post about generative AI. The dialogue Wagner gave him is the dialogue we have been receiving from the people building the machines that scrape our work without our consent. We didn’t steal it. We recreated it. It was wrong in 1986 and it is wrong in 2026, and the precision with which Wagner identified the lie before the technology even existed to tell it is the part of this strip that should keep every working artist awake at night.

Kenny does what any of us would do in that bar, with that television above the counter, and that bewildered hot rage rising in his chest. He goes back to the Big 1 offices, finds the machine that has been drawing in his style, and takes a fire axe to it. He smashes it to pieces. He destroys the chrome housings, the visible solenoids, the spindled mechanisms Kennedy drew with such loving comic-book exaggeration, and he does it with the kind of fury only an artist who has watched his own work being stolen in front of him can summon. The senior editor immediately phones the Judges. He reports, in the bleakest line of the whole strip, a murder of a great talent, by which he means the destruction of the equipment, not the slow erasure of the man holding the axe. The Judges arrive. Kenny is arrested for criminal damage. The machines, the ones that produce work in his style without his consent and without his payment, are characterised by the law as the victims. Kenny is sentenced to the iso-cubes, where he will sit for the duration of the next story while the work that bears his style continues to roll off the production line in the next room.

That is the first story. Wagner and Grant come back to the character in 1990, in the opening issues of the new Judge Dredd Megazine, in a sequel called Beyond Our Kenny that takes the parable somewhere even sharper. Kenny’s family travel south from the Cal-Hab to plead for his release. His wife is the wounded party, a woman who has watched her husband ruined by an industry that took his work and gave him nothing in return, and she sits in the offices of Big 1 and tries to make the case for him on the only ground she can think to stand on. She tells the editor that her husband is the creator. She tells him that Big 1 used his style without his agreement. She tells him that her husband has rights, that the work was his, that as the person who made the thing he ought to have some say in what is done with it. She is using the language we have all been taught to use when we talk about this. The language of authorship, of creation, of consent, of attribution, of the basic moral fact that the person who made a thing has some claim on it.

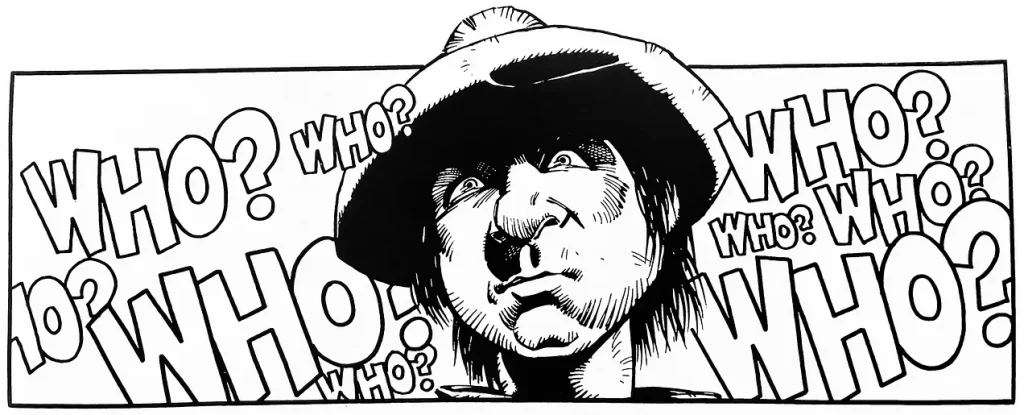

The editor pauses. He holds her gaze for a beat. Then he turns in his chair and opens the door to the adjoining office, where a row of grey men sit beside their mechanical drawing boxes producing the work that has stolen her husband’s career, and he calls through to them, cheerfully, Hey guys, get this, creators’ rights!

The entire room erupts. The laughter goes on for a panel and a half. Kennedy drew it with such care that you can almost hear it through the page, a wall of male hilarity, executives and middle managers and the men who programme the machines all laughing together at the suggestion that a person who made a thing has any right to it. The grey men in the next room laugh too. They are in on the joke. They have always been in on the joke. The wounded woman sits in her chair while a publishing company laughs at the suggestion that her husband’s creative work is his own, and Wagner and Grant put her there to make us watch it happen. They wanted us to see the face of the institution. They wanted us to hear the laughter. They wanted us to understand that the people who treat creative work as raw material do not consider the question of rights to be a serious question. They consider it a punchline.

This is the second piece of dialogue that should be printed in every working artist’s studio. Hey guys, get this, creators’ rights. It is not a hypothetical. It is the actual register, the actual tone, in which the question of creator consent is treated in every boardroom where the decision is made to train an algorithm on a body of work without the permission of the people who made it. The laughter is real. The laughter has been real all along. Wagner and Grant did not invent it for the strip. They put on the page what they had been hearing in the meetings, what every artist of their generation had been hearing in the meetings, what creators have been hearing in meetings since the comics industry first realised it could pay its artists a flat fee and keep the rest. The laughter at creators’ rights is the soundtrack of the industry. Kenny Who? simply made it visible.

What Wagner and Grant do with the rest of Beyond Our Kenny is the part of the strip nobody who cites it in passing ever seems to mention, and it is the part that ought to make any present-day tech evangelist deeply uncomfortable. The grey men in the next room keep producing their machine-drawn comics. A rival publisher, watching the saturated market, makes a gamble. They release a comic that is deliberately, ostentatiously, drawn by a human, with all the visible imperfections of an actual hand, the smudges and the off-register colour and the slight inconsistencies that no machine would ever produce. The market responds immediately. The slop loses its commodity value the instant readers discover that human-made work is the rarer product. Sales of the human-drawn comic explode. The publishers in their boardrooms, the same men who were laughing at creators’ rights a few panels ago, are now urgently demanding that Kenny be released from the iso-cubes because their competitors are eating their lunch with hand-drawn work, and they need a human artist they can put on the cover. The work that bore Kenny’s stolen style for months becomes worthless overnight. Scarcity creates premium. Premium creates margin. The publishers do not have any moral conversion. They have a commercial problem, and the solution is to remember, suddenly and loudly, that the human hand exists and might be worth something.

Wagner and Grant come back to Kenny one final time, in 2005, in a Megazine story called Who? Dares Wins. By this point Kenny is back in the Cal-Hab, in the blighted northern reaches of a country the future has forgotten, and he is still drawing. He is still working. He is still refusing to stop. What he has made now is a trashzine of his own, a strip he writes and draws and prints himself, starring a character called The Hoolie, a working-class hero who fights corrupt judges led by a recognisable thug named Judge Dread. The pages are crude by the standards of Big Meg production, hand-drawn and badly printed, none of the polished synthetic gloss the machines turn out in their thousands, and that is precisely why they catch on. The Hoolie becomes a sensation. The trashzine sells out of every black-market stall it reaches, passed hand to hand between readers who have grown sick of identical machine-generated dreck, and Kenny finds himself, for the first time in his life, commercially successful on his own terms. The Judges arrest him for defamation. He is dragged back to Mega-City One to face a sentence, defended in an appeal by Public Defender 314, his conviction overturned on technicalities, and he returns home to Cal-Hab to keep drawing. The work made by a person, sold by a person, refusing to disappear into the production line, will always find an audience if the audience is allowed to find it.

It helps to understand why the three of them were able to see all of this so clearly, and the answer is that they were not predicting anything. They were testifying. 1986 was the moment British comics broke open and the conditions that produced Kenny Who? were already burning around the men who made it. DC Comics had crossed the Atlantic with chequebooks raised and was hoovering up the talent that 2000AD had spent a decade training. The publishers in London, IPC and Fleetway, were being forced to look at their own contracts for the first time and discovering that the artists they had been treating as interchangeable hands had developed enough international leverage to walk out the door entirely. The talk in the convention bars, in the offices, in private letters and in increasingly public statements was about royalties, ownership, residuals, and the basic question of whether a creator owned anything of what they had made. Somewhere around that period John Wagner is said to have walked into the group editor’s office, dumped a heap of Judge Dredd merchandise on the desk, and observed flatly that he did not see a penny from any of it. He was not asking. He was reporting a condition. That same year, 2000AD hit prog 500, and the milestone was supposed to be a celebration. The editorial team invited the core creators to each contribute a page, and what they got instead was an inventory of grievances so candid that two of those pages, Mick McMahon’s and Brian Bolland’s, had to be redrawn before the issue could ship. Bolland was furious about the lack of royalties. McMahon characterised the artists tracing his style as bloodsuckers. The writers were angry. The artists were angrier. None of them had a union or a bargaining apparatus or any legal protection worth the name. What they had was the page, and so they used it, which is precisely what artists do when they have no other leverage; they put the protest into the craft, they make the work argue for them, and they trust that the work will outlive the meeting room where their grievances were ignored.

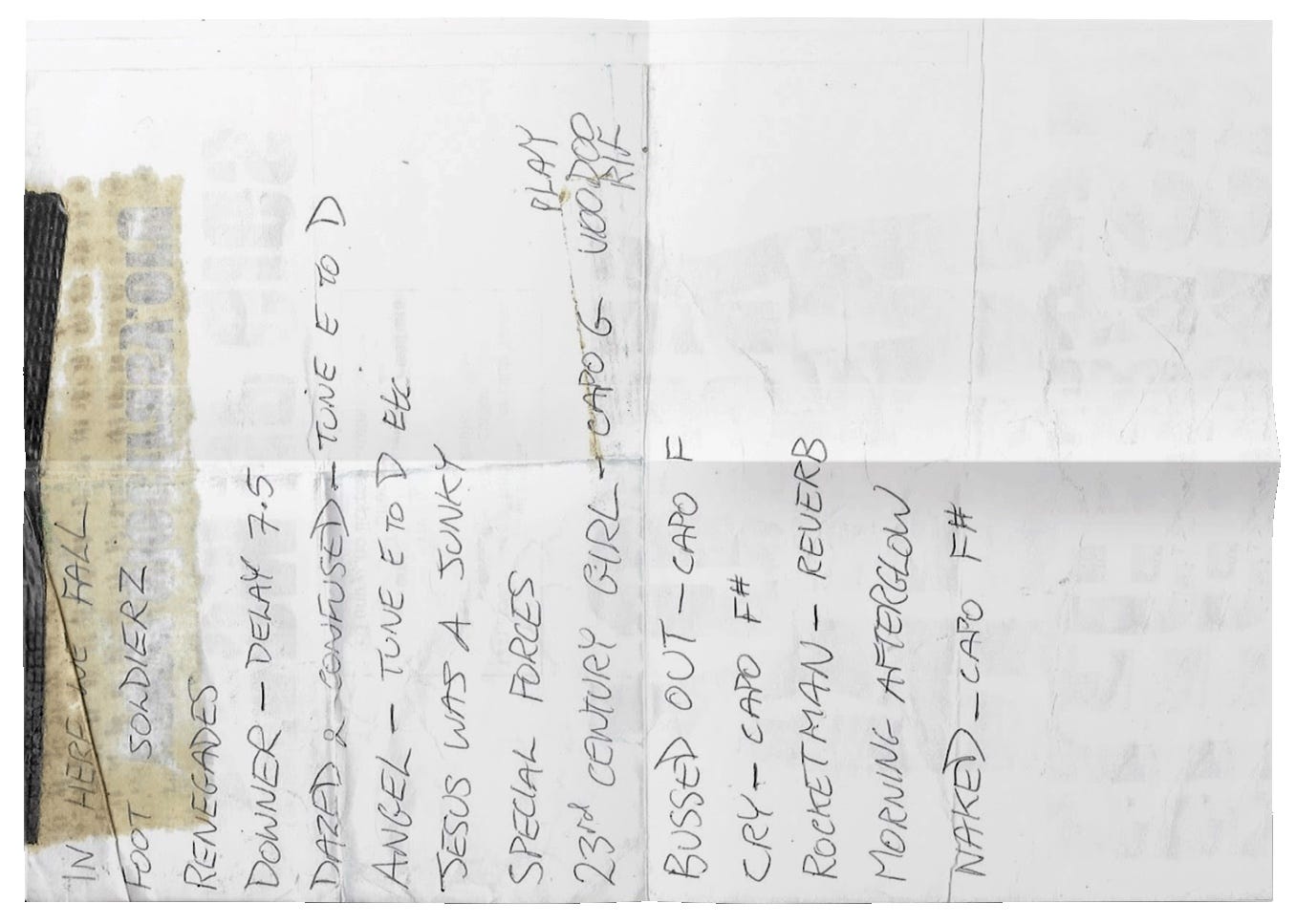

The title of the strip is a private joke with a sharp edge, and the edge cuts in real life. Kennedy had flown south from the Orkney Isles, where he then lived and still lives, to meet Wagner for the first time and discuss a creator-owned project they were trying to pitch to DC, a strip called Outcasts. Although he was by then one of the most distinctive artists in British comics, he had been trying to reach the DC offices in New York by telephone with no success. After roughly the hundredth attempt to get through, the story goes, the switchboard simply replied Kenny who? The full name had evaporated inside a New York receiver, a Scottish artist erased by a casual American mispronunciation, and the dismissal stayed with Wagner long enough to become the name of a character. Cam, working at the drawing board on the strip that grew out of that humiliation, took the joke further than the title. The character himself looks like Kennedy, drawn by Kennedy, the artist quietly painting himself into the parable as the man whose style was being replicated. Then he drew Wagner into it as well, sitting at the bar as one of the local drunks while Kenny stares up at his own stolen artwork on the television. The boardroom figures at Big 1 bear a deliberate and recognisable resemblance to the actual management at IPC. The whole strip is a roman à clef drawn so casually that most readers, then and since, have missed how much of it is on the record.

That is the parable, in three movements, written across nineteen years by men who were not trying to be prophets and could not have known they were doing it. Wagner, Grant and Kennedy were not writing science fiction in any predictive sense, because there were no large language models in 1986 or 1990 or 2005, no diffusion architectures, no scraped datasets, no synthetic images, and Kennedy’s machines were drawn with chrome housings and visible solenoids that look quaint now in a way the script around them absolutely does not. The prescience is colder than science fiction. What the three of them identified was not the technology but the underlying logic of the industry that would one day welcome it, the appetite that drove the boardrooms, the patience the publishers had for human labour only while no cheaper option existed, the laughter at the suggestion that a person who made a thing had any claim to it. They saw that the moment a machine could be trained to imitate a hand, the hand would be removed. They saw that the moment the market noticed it had been sold slop, the human hand would be quietly reinstated, not because of any moral correction but because scarcity creates premium and premium creates margin. They saw that the artist, refused by the system and prosecuted for objecting, would have only one path left open. He would go home. He would draw anyway. He would print it himself, sell it himself, build the audience himself, and outlast the machinery that had tried to make him obsolete.

The strip has been sitting in plain sight the whole time. In the Judge Dredd: The Art of Kenny Who? collection on the shelves of comic shops. In the 2000AD archive. In the Ultimate Collection partwork. Anyone could have read it. Most of us did. We laughed at the gags about Highland accents and the Man Bites Jock cover, admired Kennedy’s bubbly architecture and his offbeat colour, recognised Wagner’s writing somewhere underneath, and went home satisfied that we had spent twenty minutes inside a clever piece of mid-eighties satire. We did not think it was about us. We thought it was about an American editor in the 1980s who did not know who Cam Kennedy was.

It was about all of us. It just had to wait for the world to catch up.

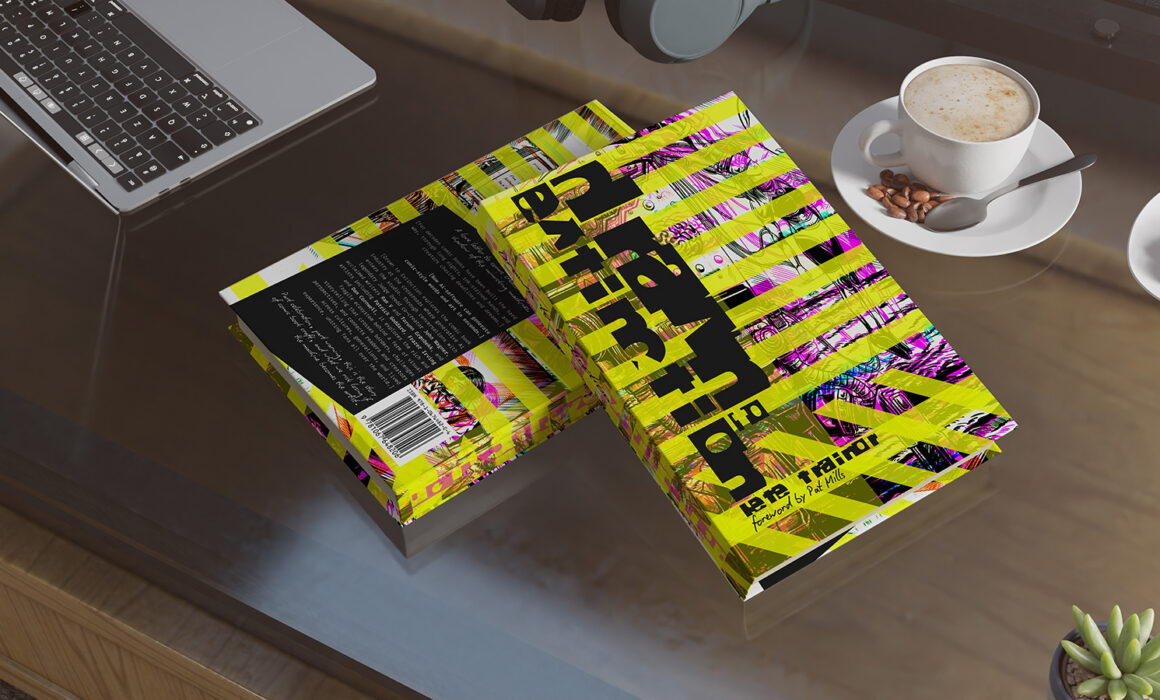

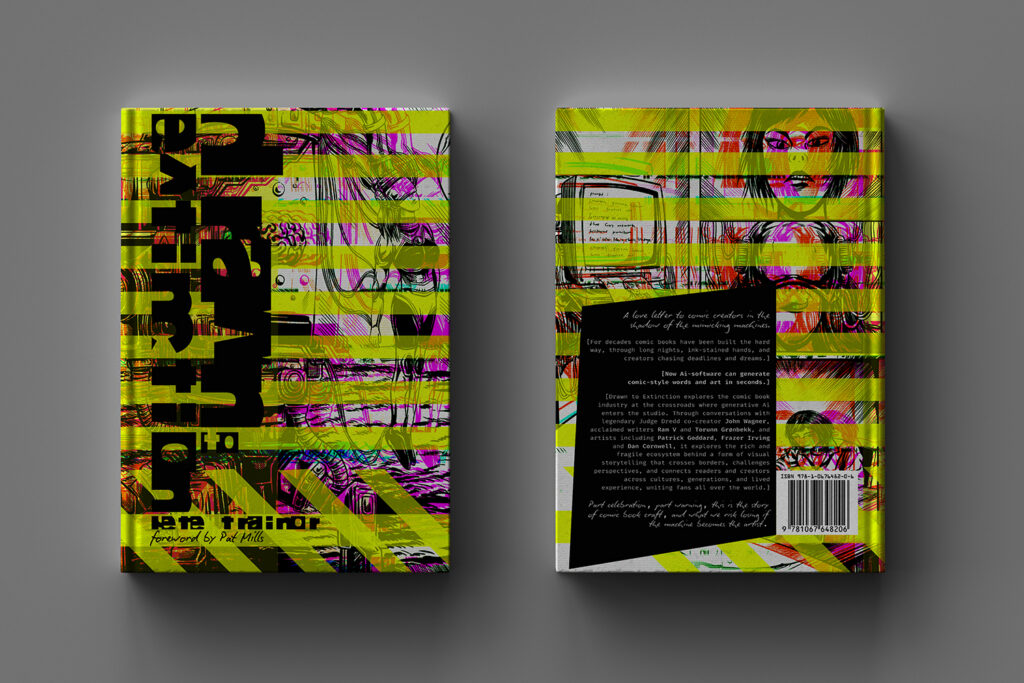

Drawn to Extinction is available now with contributions from John Wagner, Pat Mills, Patrick Goddard and many others.